Competition : https://www.kaggle.com/c/house-prices-advanced-regression-techniques/overview

Code : https://www.kaggle.com/serigne/stacked-regressions-top-4-on-leaderboard

Description

Feature Engineering Process

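- Impute missing values by proceeding sequentially through the data

- Convert some numeric variables that are really categorical

- Label-encode some categorical variables that carry ordering information

- Box Cox transformation of skewed variables: gives slightly better results on both the leaderboard and cross-validation

- Create dummy variables for the categorical variables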

#import some necessary libraries

import numpy as np # linear algebra

import pandas as pd # data processing, CSV file I/O (e.g. pd.read_csv)

%matplotlib inline

import matplotlib.pyplot as plt # Matlab-style plotting

import seaborn as sns

color = sns.color_palette()

sns.set_style('darkgrid')

import warnings

def ignore_warn(*args, **kwargs):
    pass

warnings.warn = ignore_warn #ignore annoying warning (from sklearn and seaborn)

from scipy import stats

from scipy.stats import norm, skew #for some statistics

pd.set_option('display.float_format', lambda x: '{:.3f}'.format(x)) #Limiting floats output to 3 decimal points

from subprocess import check_output

print(check_output(["ls", "../input"]).decode("utf8")) #check the files available in the directory

train = pd.read_csv('../input/train.csv')

test = pd.read_csv('../input/test.csv')

train.head(5)

#check the numbers of samples and features

print("The train data size before dropping Id feature is : {} ".format(train.shape))

print("The test data size before dropping Id feature is : {} ".format(test.shape))

#Save the 'Id' column

train_ID = train['Id']

test_ID = test['Id']

#Now drop the 'Id' column since it's unnecessary for the prediction process.

train.drop("Id", axis = 1, inplace = True)

test.drop("Id", axis = 1, inplace = True)

#check again the data size after dropping the 'Id' variable

print("\nThe train data size after dropping Id feature is : {} ".format(train.shape))

print("The test data size after dropping Id feature is : {} ".format(test.shape))

>>>

The train data size before dropping Id feature is : (1460, 81)

The test data size before dropping Id feature is : (1459, 80)

The train data size after dropping Id feature is : (1460, 80)

The test data size after dropping Id feature is : (1459, 79)

Data Processing

1. Outliers

fig, ax = plt.subplots()

ax.scatter(x = train['GrLivArea'], y = train['SalePrice'])

plt.ylabel('SalePrice', fontsize=13)

plt.xlabel('GrLivArea', fontsize=13)

plt.show()

#Deleting outliers

train = train.drop(train[(train['GrLivArea']>4000) & (train['SalePrice']<300000)].index)

#Check the graphic again

fig, ax = plt.subplots()

ax.scatter(train['GrLivArea'], train['SalePrice'])

plt.ylabel('SalePrice', fontsize=13)

plt.xlabel('GrLivArea', fontsize=13)

plt.show()

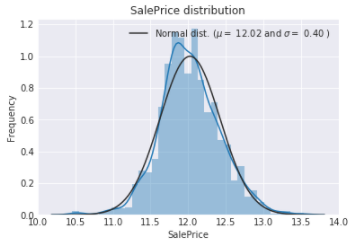
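The outlier filter above can be tried on a small table first. The following is a minimal sketch with made-up values (only the column names and the two thresholds come from the post); it writes the same rule as a boolean mask and keeps the complement, which gives the same rows as `drop(...index)`.

```python
import pandas as pd

# Toy data with made-up values; column names follow the competition data.
toy = pd.DataFrame({
    "GrLivArea": [1500, 2000, 4700, 5600, 4300],
    "SalePrice": [180000, 250000, 184000, 160000, 745000],
})

# Very large living area but low price: the pattern treated as an outlier.
is_outlier = (toy["GrLivArea"] > 4000) & (toy["SalePrice"] < 300000)

# Keep every row that does not match the outlier rule.
cleaned = toy[~is_outlier].reset_index(drop=True)
print(cleaned)
```

Note that the large but expensive house (4300 sq ft) is kept, because it follows the overall trend.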

2. Target Variable

sns.distplot(train['SalePrice'] , fit=norm);

# Get the fitted parameters used by the function

(mu, sigma) = norm.fit(train['SalePrice'])

print( '\n mu = {:.2f} and sigma = {:.2f}\n'.format(mu, sigma))

#Now plot the distribution

# raw string so that \mu and \sigma are not read as (invalid) escape sequences
plt.legend([r'Normal dist. ($\mu=$ {:.2f} and $\sigma=$ {:.2f} )'.format(mu, sigma)],
           loc='best')

plt.ylabel('Frequency')

plt.title('SalePrice distribution')

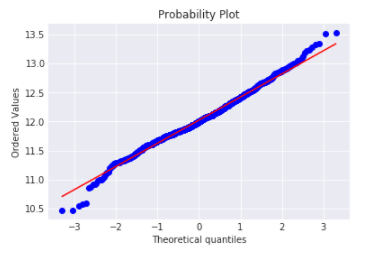

#Get also the QQ-plot

fig = plt.figure()

res = stats.probplot(train['SalePrice'], plot=plt)

plt.show()

>>>

mu = 180932.92 and sigma = 79467.79

The target variable is right-skewed. Since (linear) models generally work better with normally distributed data, it needs to be transformed.

Log-transformation of the target variable

#We use the numpy function log1p which applies log(1+x) to all elements of the column

train["SalePrice"] = np.log1p(train["SalePrice"])

#Check the new distribution

sns.distplot(train['SalePrice'] , fit=norm);

# Get the fitted parameters used by the function

(mu, sigma) = norm.fit(train['SalePrice'])

print( '\n mu = {:.2f} and sigma = {:.2f}\n'.format(mu, sigma))

#Now plot the distribution

# raw string so that \mu and \sigma are not read as (invalid) escape sequences
plt.legend([r'Normal dist. ($\mu=$ {:.2f} and $\sigma=$ {:.2f} )'.format(mu, sigma)],
           loc='best')

plt.ylabel('Frequency')

plt.title('SalePrice distribution')

#Get also the QQ-plot

fig = plt.figure()

res = stats.probplot(train['SalePrice'], plot=plt)

plt.show()

>>>

mu = 12.02 and sigma = 0.40
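To see why the log transform helps, here is a small sketch on synthetic log-normal "prices" (not the competition data; the distribution parameters are assumptions chosen to resemble the values printed above). It measures the skewness before and after `np.log1p`, and shows that `np.expm1` undoes the transform, which is what is needed later to turn predictions back into prices.

```python
import numpy as np
from scipy.stats import skew

rng = np.random.default_rng(0)

# Synthetic right-skewed prices; parameters are illustrative assumptions.
prices = rng.lognormal(mean=12.0, sigma=0.4, size=5000)

log_prices = np.log1p(prices)    # log(1 + x), as applied to SalePrice
restored = np.expm1(log_prices)  # exp(x) - 1, the inverse transform

print("skew before:", skew(prices))
print("skew after :", skew(log_prices))
```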

3. Feature Engineering

ntrain = train.shape[0]

ntest = test.shape[0]

y_train = train.SalePrice.values

all_data = pd.concat((train, test)).reset_index(drop=True)

all_data.drop(['SalePrice'], axis=1, inplace=True)

print("all_data size is : {}".format(all_data.shape))

>>>

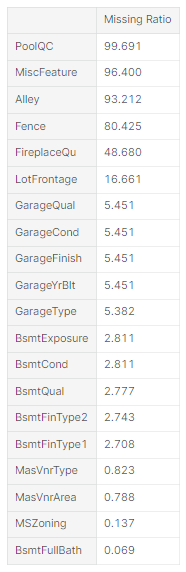

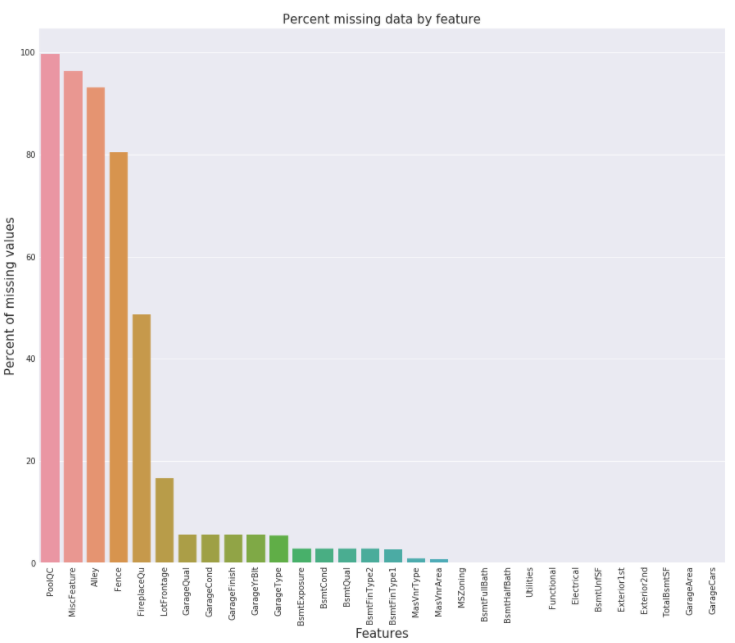

all_data size is : (2917, 79)

1. Missing Data

all_data_na = (all_data.isnull().sum() / len(all_data)) * 100

all_data_na = all_data_na.drop(all_data_na[all_data_na == 0].index).sort_values(ascending=False)[:30]

missing_data = pd.DataFrame({'Missing Ratio' :all_data_na})

missing_data.head(20)

f, ax = plt.subplots(figsize=(15, 12))

plt.xticks(rotation=90)

sns.barplot(x=all_data_na.index, y=all_data_na)

plt.xlabel('Features', fontsize=15)

plt.ylabel('Percent of missing values', fontsize=15)

plt.title('Percent missing data by feature', fontsize=15)
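The missing-ratio table above boils down to one line of pandas. Below is a minimal sketch on a toy frame (values are made up); it uses `isnull().mean()`, which is equivalent to dividing the null count by the number of rows.

```python
import numpy as np
import pandas as pd

# Toy frame with made-up values; column names follow the competition data.
toy = pd.DataFrame({
    "PoolQC": [np.nan, np.nan, np.nan, "Gd"],
    "LotFrontage": [65.0, np.nan, 80.0, 70.0],
    "LotArea": [8450, 9600, 11250, 9550],
})

# Share of missing values per column, in percent.
missing_pct = toy.isnull().mean() * 100

# Keep only columns that actually have gaps, worst first.
missing_pct = missing_pct[missing_pct > 0].sort_values(ascending=False)
print(missing_pct)
```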

2. Data Correlation

corrmat = train.select_dtypes(include=[np.number]).corr()  # numeric columns only; recent pandas raises on string columns

plt.subplots(figsize=(12,9))

sns.heatmap(corrmat, vmax=0.9, square=True)

Imputing missing values based on the exploration above

- PoolQC : the data description says NA means "No Pool". That makes sense, since most houses have no pool.

all_data["PoolQC"] = all_data["PoolQC"].fillna("None")

- MiscFeature : NA means "no misc feature"

all_data["MiscFeature"] = all_data["MiscFeature"].fillna("None")

- Alley : NA means "no alley access"

all_data["Alley"] = all_data["Alley"].fillna("None")

- Fence : NA means "no fence"

all_data["Fence"] = all_data["Fence"].fillna("None")

- FireplaceQu : data description says NA means "no fireplace"

all_data["FireplaceQu"] = all_data["FireplaceQu"].fillna("None")

- LotFrontage : the street frontage of a lot is likely to be similar to that of other houses in the same neighborhood, so missing values can be filled with the neighborhood's median LotFrontage.

#Group by neighborhood and fill in missing value by the median LotFrontage of all the neighborhood

all_data["LotFrontage"] = all_data.groupby("Neighborhood")["LotFrontage"].transform(
    lambda x: x.fillna(x.median()))

- GarageType, GarageFinish, GarageQual and GarageCond : Replacing missing data with None

for col in ('GarageType', 'GarageFinish', 'GarageQual', 'GarageCond'):
    all_data[col] = all_data[col].fillna('None')

- GarageYrBlt, GarageArea and GarageCars : Replacing missing data with 0 (Since No garage = no cars in such garage.)

for col in ('GarageYrBlt', 'GarageArea', 'GarageCars'):
    all_data[col] = all_data[col].fillna(0)

- BsmtFinSF1, BsmtFinSF2, BsmtUnfSF, TotalBsmtSF, BsmtFullBath and BsmtHalfBath : missing values are likely zero for having no basement

for col in ('BsmtFinSF1', 'BsmtFinSF2', 'BsmtUnfSF','TotalBsmtSF', 'BsmtFullBath', 'BsmtHalfBath'):
    all_data[col] = all_data[col].fillna(0)

- BsmtQual, BsmtCond, BsmtExposure, BsmtFinType1 and BsmtFinType2 : For all these categorical basement-related features, NaN means that there is no basement.

for col in ('BsmtQual', 'BsmtCond', 'BsmtExposure', 'BsmtFinType1', 'BsmtFinType2'):
    all_data[col] = all_data[col].fillna('None')

- MasVnrArea and MasVnrType : NA most likely means no masonry veneer for these houses. We can fill 0 for the area and None for the type.

all_data["MasVnrType"] = all_data["MasVnrType"].fillna("None")

all_data["MasVnrArea"] = all_data["MasVnrArea"].fillna(0)

- MSZoning (The general zoning classification) : 'RL' is by far the most common value, so missing values are filled with 'RL'

all_data['MSZoning'] = all_data['MSZoning'].fillna(all_data['MSZoning'].mode()[0])

- Utilities : all records are 'AllPub' except for one 'NoSeWa' and 2 NAs. Since the single 'NoSeWa' house is in the training set, the test set has no variation in this feature and it does not help prediction, so it is dropped.

all_data = all_data.drop(['Utilities'], axis=1)

- Functional : data description says NA means typical

all_data["Functional"] = all_data["Functional"].fillna("Typ")

- Electrical : it has one NA value. Since most values are 'SBrkr', the missing value is filled with it.

all_data['Electrical'] = all_data['Electrical'].fillna(all_data['Electrical'].mode()[0])

- KitchenQual : the single NA is filled with the most frequent value

all_data['KitchenQual'] = all_data['KitchenQual'].fillna(all_data['KitchenQual'].mode()[0])

- Exterior1st and Exterior2nd : each has a single NA, which is filled with the most frequent value

all_data['Exterior1st'] = all_data['Exterior1st'].fillna(all_data['Exterior1st'].mode()[0])

all_data['Exterior2nd'] = all_data['Exterior2nd'].fillna(all_data['Exterior2nd'].mode()[0])

- SaleType : Fill in again with the most frequent value, which is "WD"

all_data['SaleType'] = all_data['SaleType'].fillna(all_data['SaleType'].mode()[0])

- MSSubClass : NA most likely means no building class. We can replace missing values with None

all_data['MSSubClass'] = all_data['MSSubClass'].fillna("None")

Check remaining missing values if any

all_data_na = (all_data.isnull().sum() / len(all_data)) * 100

all_data_na = all_data_na.drop(all_data_na[all_data_na == 0].index).sort_values(ascending=False)

missing_data = pd.DataFrame({'Missing Ratio' :all_data_na})

missing_data.head()
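The imputation rules above fall into three patterns: an explicit "None" category where NA means the feature is absent, a group-wise median for numeric gaps, and the most frequent value for categorical gaps with no special meaning. A minimal sketch of all three on a toy frame (values are made up, column names follow the competition data):

```python
import numpy as np
import pandas as pd

toy = pd.DataFrame({
    "Neighborhood": ["A", "A", "A", "B", "B", "B"],
    "LotFrontage": [60.0, np.nan, 80.0, 30.0, 50.0, np.nan],
    "MSZoning": ["RL", "RL", None, "RM", "RL", "RL"],
    "PoolQC": [None, "Gd", None, None, None, None],
})

# Pattern 1: NA documented as "feature absent" becomes its own category.
toy["PoolQC"] = toy["PoolQC"].fillna("None")

# Pattern 2: numeric gap filled with the median of the same neighborhood.
toy["LotFrontage"] = toy.groupby("Neighborhood")["LotFrontage"].transform(
    lambda s: s.fillna(s.median())
)

# Pattern 3: categorical gap filled with the most frequent value.
toy["MSZoning"] = toy["MSZoning"].fillna(toy["MSZoning"].mode()[0])

print(toy)
```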

4. More features engineering

- Convert some numeric variables that are really categorical

#MSSubClass=The building class

all_data['MSSubClass'] = all_data['MSSubClass'].apply(str)

#Changing OverallCond into a categorical variable

all_data['OverallCond'] = all_data['OverallCond'].astype(str)

#Year and month sold are transformed into categorical features.

all_data['YrSold'] = all_data['YrSold'].astype(str)

all_data['MoSold'] = all_data['MoSold'].astype(str)

- Label-encode some categorical variables that carry ordering information

from sklearn.preprocessing import LabelEncoder

cols = ('FireplaceQu', 'BsmtQual', 'BsmtCond', 'GarageQual', 'GarageCond',
        'ExterQual', 'ExterCond','HeatingQC', 'PoolQC', 'KitchenQual', 'BsmtFinType1',
        'BsmtFinType2', 'Functional', 'Fence', 'BsmtExposure', 'GarageFinish', 'LandSlope',
        'LotShape', 'PavedDrive', 'Street', 'Alley', 'CentralAir', 'MSSubClass', 'OverallCond',
        'YrSold', 'MoSold')

# process columns, apply LabelEncoder to categorical features
for c in cols:
    lbl = LabelEncoder()
    lbl.fit(list(all_data[c].values))
    all_data[c] = lbl.transform(list(all_data[c].values))
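One point worth checking when label-encoding "ordered" categories: `LabelEncoder` assigns integer codes in the sorted order of the labels, not in quality order. The sketch below shows this for the quality levels used in several of these columns, next to a hypothetical explicit mapping (the `order` list is an illustration, not something the kernel does).

```python
from sklearn.preprocessing import LabelEncoder

quality = ["Ex", "Gd", "TA", "Fa", "Po", "None"]

# LabelEncoder sorts the labels, so codes follow alphabetical order.
enc = LabelEncoder().fit(quality)
alphabetical = dict(zip(enc.classes_, enc.transform(enc.classes_)))
print(list(enc.classes_))

# Hypothetical alternative: spell out the order from worst to best.
order = ["None", "Po", "Fa", "TA", "Gd", "Ex"]
explicit = {level: rank for rank, level in enumerate(order)}
print(explicit)
```

Tree-based models can still split on the alphabetical codes, but linear models read them as a scale, so an explicit mapping may be worth trying.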

# shape

print('Shape all_data: {}'.format(all_data.shape))

>>>

Shape all_data: (2917, 78)

- Creating a derived feature

- Area-related features are very important in determining house prices, so we add a total-area feature that sums the basement, first-floor and second-floor areas.

# Adding total sqfootage feature

all_data['TotalSF'] = all_data['TotalBsmtSF'] + all_data['1stFlrSF'] + all_data['2ndFlrSF']

- Box Cox transformation of skewed variables

numeric_feats = all_data.dtypes[all_data.dtypes != "object"].index

# Check the skew of all numerical features

skewed_feats = all_data[numeric_feats].apply(lambda x: skew(x.dropna())).sort_values(ascending=False)

print("\nSkew in numerical features: \n")

skewness = pd.DataFrame({'Skew' :skewed_feats})

skewness.head(10)

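# Note: indexing a DataFrame with a boolean DataFrame keeps every row (cells failing the test become NaN),
# so all numeric features stay in the index; skewness[skewness['Skew'].abs() > 0.75] would filter the rows.
skewness = skewness[abs(skewness) > 0.75]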

print("There are {} skewed numerical features to Box Cox transform".format(skewness.shape[0]))

from scipy.special import boxcox1p

skewed_features = skewness.index

lam = 0.15 # with lambda = 0 this is equivalent to log1p

for feat in skewed_features:
    all_data[feat] = boxcox1p(all_data[feat], lam)
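For reference, `boxcox1p(x, lam)` computes ((1 + x)^lam - 1) / lam for a nonzero lambda and log(1 + x) when lambda is 0. A short sketch checking both cases on a few made-up values:

```python
import numpy as np
from scipy.special import boxcox1p

x = np.array([0.0, 1.0, 10.0, 1000.0])  # made-up feature values
lam = 0.15                               # same lambda as in the post

transformed = boxcox1p(x, lam)

# The same transform written out by hand.
by_formula = ((1.0 + x) ** lam - 1.0) / lam

# With lambda = 0 the transform reduces to log1p.
at_zero = boxcox1p(x, 0.0)

print(transformed)
print(at_zero)
```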

>>>

There are 59 skewed numerical features to Box Cox transform

- Create dummy variables for the categorical variables

all_data = pd.get_dummies(all_data)

train = all_data[:ntrain]

test = all_data[ntrain:]

Modelling

from sklearn.linear_model import ElasticNet, Lasso, BayesianRidge, LassoLarsIC

from sklearn.ensemble import RandomForestRegressor, GradientBoostingRegressor

from sklearn.kernel_ridge import KernelRidge

from sklearn.pipeline import make_pipeline

from sklearn.preprocessing import RobustScaler

from sklearn.base import BaseEstimator, TransformerMixin, RegressorMixin, clone

from sklearn.model_selection import KFold, cross_val_score, train_test_split

from sklearn.metrics import mean_squared_error

import xgboost as xgb

import lightgbm as lgb

Define a cross validation strategy

n_folds = 5

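def rmsle_cv(model):
    # Note: get_n_splits() returns the integer 5, so cross_val_score runs a plain, unshuffled 5-fold split;
    # pass the KFold object itself as cv to apply shuffle and random_state.
    kf = KFold(n_folds, shuffle=True, random_state=42).get_n_splits(train.values)
    rmse = np.sqrt(-cross_val_score(model, train.values, y_train, scoring="neg_mean_squared_error", cv=kf))
    return rmse

Base models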

- LASSO Regression

Lasso regression is very sensitive to outliers, so the features are scaled with RobustScaler first.

lasso = make_pipeline(RobustScaler(), Lasso(alpha =0.0005, random_state=1))

- Elastic Net Regression

This model is also very sensitive to outliers, so RobustScaler is applied here as well.

ENet = make_pipeline(RobustScaler(), ElasticNet(alpha=0.0005, l1_ratio=.9, random_state=3))
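To illustrate why `RobustScaler` is preferred for these two linear models, here is a minimal sketch on one made-up feature with a single extreme value. `RobustScaler` centers on the median and scales by the interquartile range, so the outlier barely affects how the ordinary values are scaled; `StandardScaler` (used here only for comparison) uses the mean and standard deviation, which the outlier inflates.

```python
import numpy as np
from sklearn.preprocessing import RobustScaler, StandardScaler

# One feature, four ordinary values and one extreme value (made up).
x = np.array([[1.0], [2.0], [3.0], [4.0], [100.0]])

robust = RobustScaler().fit_transform(x).ravel()      # (x - median) / IQR
standard = StandardScaler().fit_transform(x).ravel()  # (x - mean) / std

print("robust  :", robust)
print("standard:", standard)
```

With the standard scaler the four ordinary values are squeezed into a very narrow range, while the robust scaler keeps them well separated.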

- Kernel Ridge Regression

KRR = KernelRidge(alpha=0.6, kernel='polynomial', degree=2, coef0=2.5)

- Gradient Boosting Regression

The huber loss makes the model robust to outliers.

GBoost = GradientBoostingRegressor(n_estimators=3000, learning_rate=0.05,
                                   max_depth=4, max_features='sqrt',
                                   min_samples_leaf=15, min_samples_split=10,
                                   loss='huber', random_state =5)
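The idea behind the huber loss can be sketched in a few lines of NumPy: it is quadratic for small residuals and linear for large ones, so a single large error contributes far less than under squared error. The fixed threshold `delta` below is an assumption for illustration; scikit-learn instead derives the threshold from a quantile of the residuals, controlled by the `alpha` parameter.

```python
import numpy as np

def huber(residual, delta=1.0):
    """Huber loss with a fixed, illustrative threshold delta."""
    r = np.abs(residual)
    # Quadratic near zero, linear beyond delta.
    return np.where(r <= delta, 0.5 * r**2, delta * (r - 0.5 * delta))

residuals = np.array([0.5, 1.0, 10.0])  # the last one plays the outlier
squared = 0.5 * residuals**2
robust = huber(residuals)

print("squared:", squared)
print("huber  :", robust)
```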

- XGBoost

model_xgb = xgb.XGBRegressor(colsample_bytree=0.4603, gamma=0.0468,
                             learning_rate=0.05, max_depth=3,
                             min_child_weight=1.7817, n_estimators=2200,
                             reg_alpha=0.4640, reg_lambda=0.8571,
                             subsample=0.5213, silent=1,
                             random_state =7, nthread = -1)

- LightGBM

model_lgb = lgb.LGBMRegressor(objective='regression',num_leaves=5,
                              learning_rate=0.05, n_estimators=720,
                              max_bin = 55, bagging_fraction = 0.8,
                              bagging_freq = 5, feature_fraction = 0.2319,
                              feature_fraction_seed=9, bagging_seed=9,
                              min_data_in_leaf =6, min_sum_hessian_in_leaf = 11)

Base models scores

Lasso Regression

score = rmsle_cv(lasso)

print("\nLasso score: {:.4f} ({:.4f})\n".format(score.mean(), score.std()))

>>>

Lasso score: 0.1115 (0.0074)
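The scoring pattern used here can be reproduced on synthetic data. The sketch below (random data and coefficients are assumptions, the model settings are the ones from the post) passes the `KFold` object directly as `cv`, so the shuffle setting is actually applied, and converts the negated MSE returned by scikit-learn into an RMSE per fold. Because the real target is `log1p(SalePrice)`, the RMSE on that scale plays the role of the RMSLE.

```python
import numpy as np
from sklearn.linear_model import Lasso
from sklearn.model_selection import KFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import RobustScaler

rng = np.random.default_rng(0)

# Synthetic regression problem standing in for the house-price features.
X = rng.normal(size=(200, 5))
y = X @ np.array([1.0, -2.0, 0.0, 0.5, 0.0]) + rng.normal(scale=0.1, size=200)

model = make_pipeline(RobustScaler(), Lasso(alpha=0.0005, random_state=1))

# A KFold object (not an integer) keeps shuffle and random_state in effect.
kf = KFold(n_splits=5, shuffle=True, random_state=42)
neg_mse = cross_val_score(model, X, y, scoring="neg_mean_squared_error", cv=kf)
rmse = np.sqrt(-neg_mse)

print("RMSE: {:.4f} ({:.4f})".format(rmse.mean(), rmse.std()))
```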

score = rmsle_cv(ENet)

print("ElasticNet score: {:.4f} ({:.4f})\n".format(score.mean(), score.std()))

>>>

ElasticNet score: 0.1116 (0.0074)

Kernel Ridge Regression

score = rmsle_cv(KRR)

print("Kernel Ridge score: {:.4f} ({:.4f})\n".format(score.mean(), score.std()))

>>>

Kernel Ridge score: 0.1153 (0.0075)

Gradient Boosting Regression

score = rmsle_cv(GBoost)

print("Gradient Boosting score: {:.4f} ({:.4f})\n".format(score.mean(), score.std()))

>>>

Gradient Boosting score: 0.1177 (0.0080)

score = rmsle_cv(model_xgb)

print("Xgboost score: {:.4f} ({:.4f})\n".format(score.mean(), score.std()))

>>>

Xgboost score: 0.1161 (0.0079)

score = rmsle_cv(model_lgb)

print("LGBM score: {:.4f} ({:.4f})\n" .format(score.mean(), score.std()))

>>>

LGBM score: 0.1157 (0.0067)

Stacking Models

Simplest Stacking approach : Averaging base models

class AveragingModels(BaseEstimator, RegressorMixin, TransformerMixin):
    def __init__(self, models):
        self.models = models

    # we define clones of the original models to fit the data in
    def fit(self, X, y):
        self.models_ = [clone(x) for x in self.models]

        # Train cloned base models
        for model in self.models_:
            model.fit(X, y)

        return self

    #Now we do the predictions for cloned models and average them
    def predict(self, X):
        predictions = np.column_stack([
            model.predict(X) for model in self.models_
        ])
        return np.mean(predictions, axis=1)

averaged_models = AveragingModels(models = (ENet, GBoost, KRR, lasso))

score = rmsle_cv(averaged_models)

print(" Averaged base models score: {:.4f} ({:.4f})\n".format(score.mean(), score.std()))

>>>

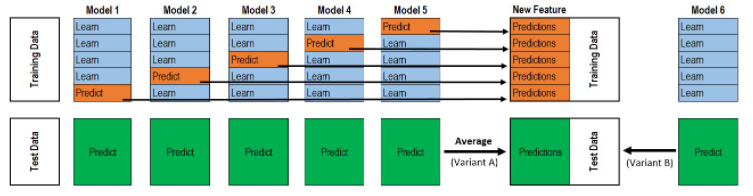

Averaged base models score: 0.1091 (0.0075)

Less simple Stacking : Adding a Meta-model

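1. Split the training data into two disjoint sets (here train and holdout)

2. Train several base models on the first part (train)

3. Test these base models on the second part (holdout)

4. Use the predictions from step 3 (the out-of-fold predictions) as inputs and the correct responses (target variable) as outputs to train a higher-level learner, called the meta-model.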
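The steps above can be sketched with scikit-learn's `cross_val_predict`, which returns, for every row, a prediction from a model that did not see that row during fitting. This is a simplified illustration on synthetic data, not the class from the kernel: the data, the choice of two base models, and the decision to refit the base models on all rows for prediction are assumptions made to keep the example short.

```python
import numpy as np
from sklearn.kernel_ridge import KernelRidge
from sklearn.linear_model import ElasticNet, Lasso
from sklearn.model_selection import KFold, cross_val_predict

rng = np.random.default_rng(0)
X = rng.normal(size=(300, 4))
y = X[:, 0] - 2.0 * X[:, 1] + 0.5 * X[:, 2] ** 2 + rng.normal(scale=0.1, size=300)

base_models = [
    ElasticNet(alpha=0.001),
    KernelRidge(alpha=0.6, kernel="polynomial", degree=2, coef0=2.5),
]
kfold = KFold(n_splits=5, shuffle=True, random_state=156)

# Steps 1-3: one column of out-of-fold predictions per base model.
oof = np.column_stack([cross_val_predict(m, X, y, cv=kfold) for m in base_models])

# Step 4: the meta-model learns how to combine the base predictions.
meta_model = Lasso(alpha=0.0005).fit(oof, y)

# Prediction on new rows: refit each base model on all data (a simplification;
# the class in the post averages the per-fold models instead).
fitted = [m.fit(X, y) for m in base_models]
X_new = rng.normal(size=(10, 4))
meta_features = np.column_stack([m.predict(X_new) for m in fitted])
y_new = meta_model.predict(meta_features)
print(y_new)
```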

class StackingAveragedModels(BaseEstimator, RegressorMixin, TransformerMixin):
    def __init__(self, base_models, meta_model, n_folds=5):
        self.base_models = base_models
        self.meta_model = meta_model
        self.n_folds = n_folds

    # We again fit the data on clones of the original models
    def fit(self, X, y):
        self.base_models_ = [list() for x in self.base_models]
        self.meta_model_ = clone(self.meta_model)
        kfold = KFold(n_splits=self.n_folds, shuffle=True, random_state=156)

        # Train cloned base models then create out-of-fold predictions
        # that are needed to train the cloned meta-model
        out_of_fold_predictions = np.zeros((X.shape[0], len(self.base_models)))
        for i, model in enumerate(self.base_models):
            for train_index, holdout_index in kfold.split(X, y):
                instance = clone(model)
                self.base_models_[i].append(instance)
                instance.fit(X[train_index], y[train_index])
                y_pred = instance.predict(X[holdout_index])
                out_of_fold_predictions[holdout_index, i] = y_pred

        # Now train the cloned meta-model using the out-of-fold predictions as new feature
        self.meta_model_.fit(out_of_fold_predictions, y)
        return self

    #Do the predictions of all base models on the test data and use the averaged predictions as
    #meta-features for the final prediction which is done by the meta-model
    def predict(self, X):
        meta_features = np.column_stack([
            np.column_stack([model.predict(X) for model in base_models]).mean(axis=1)
            for base_models in self.base_models_ ])
        return self.meta_model_.predict(meta_features)

Stacking Averaged models Score

- average ENet, KRR, GBoost and add Lasso as meta-model

stacked_averaged_models = StackingAveragedModels(base_models = (ENet, GBoost, KRR),
                                                 meta_model = lasso)

score = rmsle_cv(stacked_averaged_models)

print("Stacking Averaged models score: {:.4f} ({:.4f})".format(score.mean(), score.std()))

>>>

Stacking Averaged models score: 0.1085 (0.0074)

Ensembling StackedRegressor, XGBoost and LightGBM

def rmsle(y, y_pred):
    return np.sqrt(mean_squared_error(y, y_pred))

StackedRegressor

stacked_averaged_models.fit(train.values, y_train)

stacked_train_pred = stacked_averaged_models.predict(train.values)

stacked_pred = np.expm1(stacked_averaged_models.predict(test.values))

print(rmsle(y_train, stacked_train_pred))

>>>

0.0781571937916

XGBoost

model_xgb.fit(train, y_train)

xgb_train_pred = model_xgb.predict(train)

xgb_pred = np.expm1(model_xgb.predict(test))

print(rmsle(y_train, xgb_train_pred))

>>>

0.0785165142425

LightGBM

model_lgb.fit(train, y_train)

lgb_train_pred = model_lgb.predict(train)

lgb_pred = np.expm1(model_lgb.predict(test.values))

print(rmsle(y_train, lgb_train_pred))

>>>

0.0716757468834

'''RMSE on the entire Train data when averaging'''

print('RMSLE score on train data:')

print(rmsle(y_train, stacked_train_pred*0.70 +
            xgb_train_pred*0.15 + lgb_train_pred*0.15))

>>>

RMSLE score on train data:

0.0752190464543

The 0.7 / 0.15 / 0.15 weights were chosen arbitrarily by the kernel's author.

ensemble = stacked_pred*0.70 + xgb_pred*0.15 + lgb_pred*0.15
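The final blend is a plain weighted average of the three prediction vectors. A minimal sketch with a hypothetical helper `blend` (not part of the kernel) and made-up predictions; it also checks that the weights sum to 1, which is easy to break when trying other combinations.

```python
import numpy as np

def blend(predictions, weights):
    """Weighted average of equally shaped prediction arrays (hypothetical helper)."""
    weights = np.asarray(weights, dtype=float)
    if not np.isclose(weights.sum(), 1.0):
        raise ValueError("weights should sum to 1")
    return np.average(np.vstack(predictions), axis=0, weights=weights)

# Made-up price predictions from the three models for two houses.
stacked = np.array([200000.0, 150000.0])
xgb_p = np.array([210000.0, 140000.0])
lgb_p = np.array([190000.0, 160000.0])

blended = blend([stacked, xgb_p, lgb_p], [0.70, 0.15, 0.15])
print(blended)
```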
